This is the fourth and final part in a series of posts about the networking architecture for Dragon Saddle Melee.

Rendering the Simulation

Now that we’re spitting out a series of game states each frame in Dragon Saddle Melee, we need to translate that into graphics moving on the screen.

Bookkeeping

In the previous section, we talked about needing to keep each frame’s state independent of the others and to avoid global caches. This rule has to be discarded when it comes to rendering. DSM uses Unity, where creating and destroying objects isn’t free, and the engine isn’t designed to destroy and recreate every object each frame. So we end up relying on a global cache that maps object IDs to Unity game objects.

This is pretty straightforward after we put some restrictions on how objects can change from frame to frame. We don’t allow them to fundamentally change their type and we never reuse an ID. This way, we can safely instantiate a new Unity object the first time we detect it in a frame’s state. We can assume that in a future frame the same object isn’t going to suddenly change from being a dragon to being a laser beam.
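As a rough illustration of that bookkeeping, here is a minimal sketch in Python rather than Unity C#. The names `RenderObject`, `RenderCache`, and `sync` are hypothetical stand-ins, not DSM's actual code, and the frame state is assumed to be a simple mapping of object ID to object type.

```python
from dataclasses import dataclass


@dataclass
class RenderObject:
    """Stand-in for an engine-side object (hypothetical, not a Unity type)."""
    object_id: int
    kind: str


class RenderCache:
    """Maps simulation object IDs to long-lived render objects."""

    def __init__(self):
        self._objects = {}

    def sync(self, frame_state):
        """frame_state maps object ID -> kind for the frame being rendered."""
        for object_id, kind in frame_state.items():
            existing = self._objects.get(object_id)
            if existing is None:
                # First time this ID shows up: instantiate exactly once.
                self._objects[object_id] = RenderObject(object_id, kind)
            elif existing.kind != kind:
                # The restriction from the text: an ID never changes type.
                raise ValueError(f"object {object_id} changed type")
        # Drop render objects whose IDs are absent from this frame's state.
        for object_id in list(self._objects):
            if object_id not in frame_state:
                del self._objects[object_id]
        return self._objects
```

Calling `sync` once per rendered frame keeps the same render object alive for as long as its ID stays in the state, so nothing is recreated frame to frame.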

Interpolation

The most important part of rendering the game state is where to position the objects on the screen. The naive solution would be to grab the position from the most recent state for each object and place the object at that location in the Unity scene. In practice, this ends up creating very jerky movement for all of the objects. This is mainly caused by two things.

First, our physics simulation runs at a tick rate (20 ticks per second) that does not match the rendering frame rate (often 100+ frames per second). Even though we’re rendering much faster than the simulation, we’re losing that benefit. 20 frames per second of effective motion doesn’t look particularly smooth. We want to do some interpolation to smooth that out.

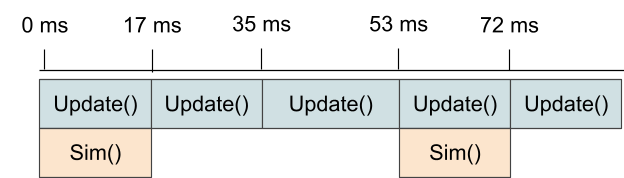

Second, our physics simulation tries to maintain a constant tick rate, but that doesn’t mean it runs one tick precisely every 50ms by the clock on the wall. On every trip through the game’s loop (in Unity, each time Update() is called), the simulation accumulates the time elapsed since the last simulation tick. When that accumulator exceeds 50ms, it’s time to run the simulation again. That means the simulation might run at, for example, an accumulator value of 53ms and then carry the remaining 3ms over while it waits for the next tick. This difference between our average tick rate and an individual tick-to-tick time causes noticeable jitter.
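The accumulator logic can be sketched roughly like this, in Python and with times in milliseconds. `FixedTimestep` and `update` are hypothetical names, and catching up by running several ticks in one update is my assumption about how a long frame would be handled.

```python
TICK_LENGTH_MS = 50.0  # 20 simulation ticks per second


class FixedTimestep:
    """Accumulates render-frame time and reports how many sim ticks are due."""

    def __init__(self, tick_length_ms=TICK_LENGTH_MS):
        self.tick_length_ms = tick_length_ms
        self.accumulator_ms = 0.0

    def update(self, delta_ms):
        """Call once per render frame with the time since the last frame."""
        self.accumulator_ms += delta_ms
        ticks_due = 0
        while self.accumulator_ms >= self.tick_length_ms:
            # Run one tick and carry the remainder over to the next one.
            self.accumulator_ms -= self.tick_length_ms
            ticks_due += 1
        return ticks_due
```

With a 53ms frame, `update(53.0)` reports one tick due and leaves 3ms in the accumulator, matching the example above.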

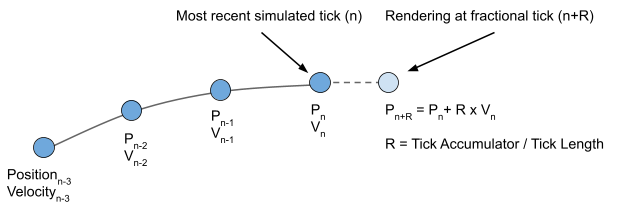

The solution, of course, is to do some interpolation on each rendering update based on how far into the next simulation tick we are. The simulation tick time accumulator mentioned above provides that measurement. The question then becomes which two states to interpolate between. We can either interpolate forward from the most recent tick or backward, between the second most recent tick and the most recent one.

If we interpolate forward, we could use the current position, the current velocity, and the current accumulator to come up with an interpolation like:

$$
\text{Position\_Rendered}_n = \text{Position\_Sim}_n + \text{Velocity\_Sim}_n \times \frac{\text{Accumulator}}{\text{Tick\_Length}}
$$

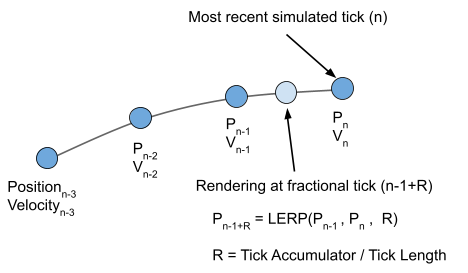

This method has some issues. The real physics simulation is more complicated than this formula. Velocity is not fixed over a simulation tick -- it is affected by the forces on the object. To get those forces to correctly determine velocity, we’d need to know the inputs for the next frame. We don’t know those (even for our locally controlled object), so we’d have to guess. This introduces a whole new layer of mis-prediction at the rendering layer on top of what we already have in the simulation part of the game. In practice, I think the results turn out better when we again accept slightly more latency in exchange for more consistent results. We’ll interpolate between the second most recent frame and the most recent frame:

$$
\text{Position\_Rendered}_n = \text{LERP}\left( \text{Position\_Sim}_{n-1},\ \text{Position\_Sim}_n,\ \frac{\text{Accumulator}}{\text{Tick\_Length}} \right)
$$

Where LERP(...) is the linear interpolation function. This ends up producing much smoother results and is what DSM uses. This could be improved further by using something better than linear interpolation because we know the first and second derivatives of position at both of those states.
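Here is a minimal sketch of that backward interpolation in Python, assuming positions are plain tuples of floats. The function names are hypothetical, and clamping the blend factor to the 0..1 range is my own addition to guard against an accumulator that overshoots a full tick.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def rendered_position(prev_sim, curr_sim, accumulator, tick_length):
    """Blend between the two most recent simulated positions."""
    # Clamp so a late tick never extrapolates past the newest state.
    t = min(max(accumulator / tick_length, 0.0), 1.0)
    return tuple(lerp(p, c, t) for p, c in zip(prev_sim, curr_sim))
```

For example, halfway through a 50ms tick, `rendered_position((0.0, 0.0), (10.0, 20.0), 25.0, 50.0)` lands at the midpoint of the two simulated positions.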

Mis-prediction

Unfortunately, we still have to deal with the problem of mis-predicting the location of an object in our local simulation. Our simulation is chugging along, simulating frames at the tick rate with the best information available. For our locally controlled object, that information is very good because of the command queue. For server controlled NPC objects, that information is also very good because the server sends us future commands for those objects in advance. For other remote player controlled objects, our local simulation just has to guess until we receive the real command for that tick from the server. Then we roll back and simulate all the frames up to the present with the new information.

The problem that emerges is that this new definitive data about a previous frame will cascade forward to impact the frame we’re currently rendering. We could just carry on and jump the graphical representation to the best position we have for the current frame. That actually doesn’t look too bad in many cases. However, a game design choice in DSM is that the dragons flap their wings when the player presses the flap button. This flapping causes a sudden upward force on the dragon that lasts a few frames. Unfortunately, sudden forces caused by player input are the worst-case scenario for mis-prediction, which leads to bigger graphical jumps in the object’s position.

Again, the solution that DSM uses is to interpolate away this mis-prediction error. We track where we rendered an object in the previous frame and the error between the rendered position and our simulated position. Then we reduce that error by a factor over time. This gets the object back to its most accurate position quickly, because it’s important for the player to have an accurate representation of the other objects’ positions. Plus, it provides some smoothing so that the game looks better.

This part of the interpolation gets computed every time we run a new simulation tick:

$$
\text{Position\_Error}_{n-1} = \text{Position\_Sim}_{n-1} - \text{Position\_Rendered}_{n-1}
$$

$$
\text{Position\_Rendered}_n = \text{Position\_Sim}_n - \text{Interp\_Factor} \times \text{Position\_Error}_{n-1}
$$

And, this part gets computed every time we render a frame:

$$
\text{Current\_Position\_Rendered} = \text{LERP}\left( \text{Position\_Rendered}_{n-1},\ \text{Position\_Rendered}_n,\ \frac{\text{Accumulator}}{\text{Tick\_Length}} \right)
$$

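Interp_Factor is a value between 0 and 1.0 that determines how fast we reduce the visual error of the object’s position. It is the fraction of the previous tick’s error that carries into the next rendered position, so smaller values correct faster. DSM currently uses a value of 0.75.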

Also, DSM detects position errors above a certain threshold and just jumps the object to the correct position. After a big bout of packet loss, or when a dragon uses a mechanic that warps him across the map, it looks better to just jump the dragon than to interpolate across the whole screen in a handful of frames.
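To make the per-tick part concrete, here is a small one-dimensional sketch in Python. The 0.75 factor comes from the text. The function name and the `SNAP_THRESHOLD` value are placeholders I made up, since the actual threshold isn't given here.

```python
INTERP_FACTOR = 0.75   # value quoted in the text
SNAP_THRESHOLD = 5.0   # hypothetical; the real threshold is not stated


def next_rendered(sim_prev, rendered_prev, sim_curr,
                  interp_factor=INTERP_FACTOR,
                  snap_threshold=SNAP_THRESHOLD):
    """Compute the rendered position for the new tick (1D for brevity)."""
    error = sim_prev - rendered_prev
    if abs(error) > snap_threshold:
        # Error too large to smooth: jump straight to the simulated position.
        return sim_curr
    # Carry a fraction of the old error forward so the gap shrinks each tick.
    return sim_curr - interp_factor * error
```

If the simulated position sits still at 10.0 and the object was rendered at 6.0, successive ticks render it at 7.0, 7.75, and 8.3125, with the remaining error shrinking by a quarter each tick.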

Animation Problems

A similar problem arises with object animations. For example, when a player pushes the flap button for his dragon, it plays an animation of the dragon flapping its wings. By the time a client finds out that another remote player is flapping his wings, the flapping animation has already progressed through a few frames. So we need to start playing that animation but jump ahead a little to the current frame of that animation. To smooth this, we repeat the same error trick we used with the rendered position: track an error value that represents the difference between the frame of the animation we are showing and the frame the simulation says we’re actually at. Then, reduce that error rapidly by a factor over time.
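Applied to animation, the same update might look something like this Python sketch. It measures progress in animation frames, and the catch-up factor of 0.5 is purely illustrative, since no animation factor is given here. The function name is hypothetical too.

```python
def next_shown_anim_frame(sim_frame_prev, shown_frame_prev, sim_frame_curr,
                          factor=0.5):
    """Move the displayed animation frame toward the simulated one.

    factor is the share of the previous error that is kept
    (0.5 is an illustrative guess, not DSM's value).
    """
    error = sim_frame_prev - shown_frame_prev
    return sim_frame_curr - factor * error
```

Say the client learns about a remote flap when the simulation is already on animation frame 4 and nothing has been shown yet. Over the next three ticks the displayed frame goes 3.0, 5.0, 6.5 while the simulation goes 5, 6, 7, so the gap halves each tick.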

One other implementation gotcha with this is the Unity animation system. The default Unity animation system is not really set up to easily have this per-frame level control over an animation. It’s more designed to have a trigger that causes a certain animation to play and possibly blend a few animations together (like a character walking and firing a gun). I found a better solution using the Unity asset Animancer Pro. Big thanks to them.

Animation Tricks

DSM, like many games, also uses animation to hide latency. This is done throughout the game, from the flapping animation to a dragon’s rider firing a laser pistol. The trick is pretty much the same: start playing the animation immediately, but have the real effect happen several frames into the animation. For example, have the dragon rider point the pistol for a few frames, then have the shot fire and the pistol recoil. Similarly, have the dragon’s wings move up into position for a couple of frames before they swoop down and the physics forces are actually applied to the object. This slight delay still gives immediate feedback to the local player performing an action because the animation begins immediately. But it masks the latency of an action performed by another remote player. When the local player’s client gets informed that the other player has performed an action, his client plays through the beginning of the animation quicker to catch up before the action itself is performed.
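One way to picture the catch-up is as a playback speed for the wind-up portion of the animation. This Python sketch is just an assumption about how that could be computed, with hypothetical names. It is not necessarily how DSM does it.

```python
def windup_playback_speed(windup_frames, frames_late):
    """Speed multiplier so the wind-up ends when the real effect fires.

    windup_frames: frames between animation start and the real effect.
    frames_late: how many of those frames had passed when we found out.
    Returns None when the wind-up window has already closed.
    """
    if frames_late <= 0:
        return 1.0  # local player: play at normal speed
    remaining = windup_frames - frames_late
    if remaining <= 0:
        return None  # too late to catch up; caller should skip to the effect
    # Fit the whole wind-up into the frames that are left.
    return windup_frames / remaining
```

With a 4-frame wind-up, a client that hears about the action 2 frames late would play the wind-up at double speed.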

Sound Effects

Another problem area with representing the simulation on a client’s computer is playing sound effects. We can’t just look at what happened in the current frame and play sound effects accordingly. When we roll back to re-simulate previous frames based on definitive information received from the server, we may discover that a dragon rider fired a pistol several frames before the one we’re currently rendering. If our client is only generating sound effects based on the events in the most recent frame, we’d miss the cue to play the shooting sound.

To catch the events in re-simulated frames that generate sound effects, we need to keep track of which frames have been re-simulated. Then, the client should check that set of re-simulated frames for events that would generate sounds and play them. However, since definitive information is constantly arriving and our client is constantly rolling back and re-simulating old frames, events that generate sounds will be encountered many times by the client. When the client discovers another player fired a gun on frame N, we only want to play that sound effect once instead of every time we re-simulate frame N.

The solution is to keep a cache of events and associated sound effects. In DSM, each event that would generate a sound effect generates a hash value. The hash value is based on unique parameters of the event. For example, Player A uses Item X. Or Player A collides with Player B. When an event occurs within a re-simulated frame, the client checks the cache to see if it’s already playing a sound effect for that event. If not, start playing it and add the event to the cache. If it’s already playing, don’t do anything. Then when a sound effect finishes, remove the event from the cache. It’s important to not allow the simulation tick number to be a factor in an event’s hash. If our client determines that Player A and Player B collide on frame N, but a rollback re-simulation determines they actually collided on frame N+1, we still don’t want to repeat the sound effect.
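A minimal sketch of such a cache in Python follows. The class and method names are hypothetical, and a tuple stands in for the hash value (Python hashes it when it goes into the set). Note that the tick number is deliberately left out of the key.

```python
class SoundEventCache:
    """Tracks which sound-generating events are currently playing."""

    def __init__(self):
        self._playing = set()

    @staticmethod
    def event_key(kind, *participants):
        # Built only from the event's identifying parameters,
        # never from the simulation tick number.
        return (kind,) + participants

    def on_event(self, kind, *participants):
        """Return True if the caller should start the sound effect now."""
        key = self.event_key(kind, *participants)
        if key in self._playing:
            return False  # already playing for this event; do nothing
        self._playing.add(key)
        return True

    def on_sound_finished(self, kind, *participants):
        """Forget the event once its sound effect has finished."""
        self._playing.discard(self.event_key(kind, *participants))
```

Because the key ignores the frame, a collision first predicted on frame N and later re-simulated on frame N+1 maps to the same entry and won't trigger a second sound.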

Another problem arises when our client simulates an event but a rollback re-simulation shows that the event did not occur. In most cases in DSM, we just let the sound effect finish playing. It gets too hard to tell whether the event didn’t occur on the exact frame we initially predicted and will occur shortly after -- in this case, we want the sound effect to continue playing -- or whether the event will never occur at all -- in that case, we want to stop it. The problems from this are mitigated by keeping sound effects short. DSM does have an exception to this rule in certain cases of long-playing sound effects. For example, a player can activate a jetpack on his dragon and trigger an effect that lasts for many seconds. If we detect that the event never occurred, we stop the whole jetpack effect, including the sound effect.

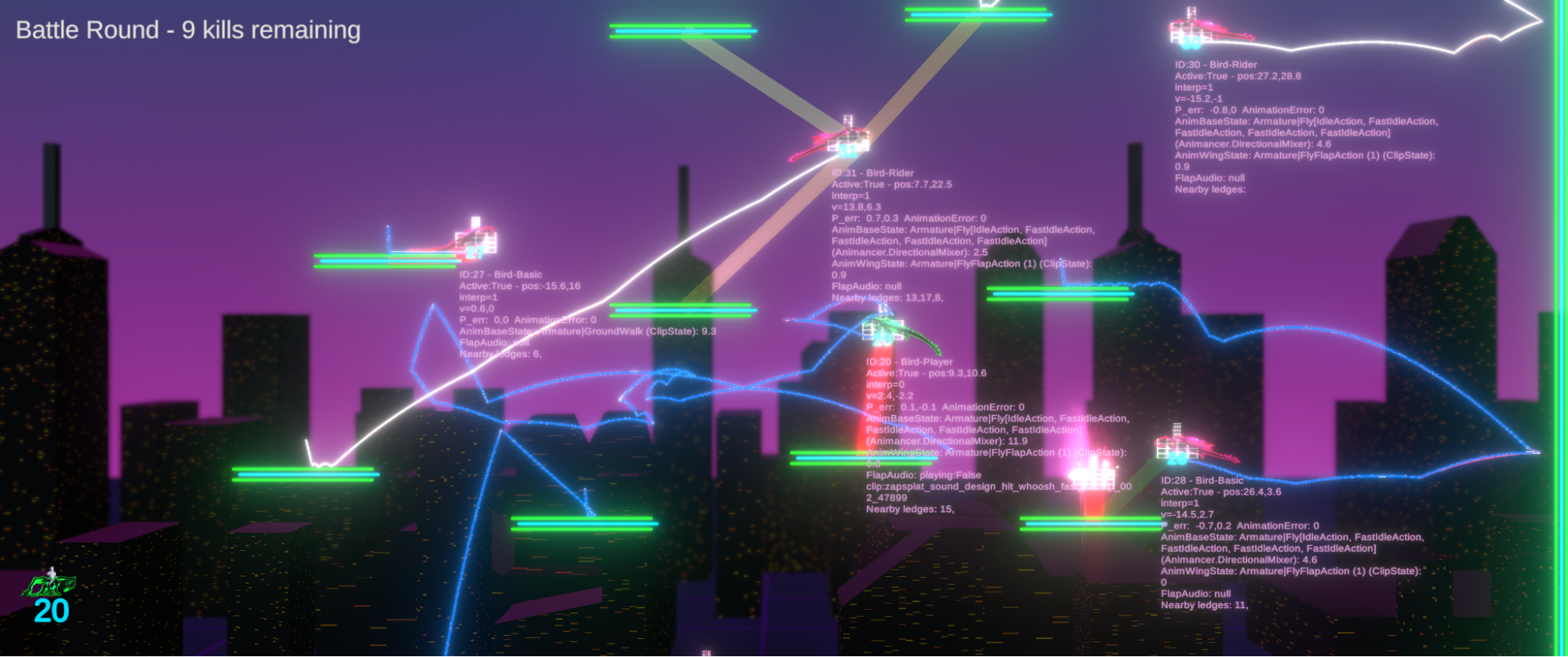

Debugging Tools

One last note is that building debugging tools into the game paid huge dividends when investigating why things weren’t working as intended. DSM has debugging displays that show where an object was first rendered, where the simulation currently shows it, and where the last definitive state from the server shows it. They also show the known command queues for objects and lots of debugging text related to gameplay flags and counters on objects. The debug view can also step backwards in time to show the game state in previous frames. I only wish that I’d implemented some of these tools sooner and more completely. It would have saved a lot of debugging time in the end.

Conclusion

Implementing rollback networking in Dragon Saddle Melee was a great, challenging learning experience. Hopefully I’ve been able to at least pass on a few useful nuggets in this blog. If you’re new to it like I was and decide to also throw yourself into the deep end, I highly recommend checking out the links in the Resources section at the beginning.

Chris

Developer of Dragon Saddle Melee

Co-Founder of Main Tank Software

March 10th, 2022